High-quality image compression is becoming essential as today’s cameras deliver higher resolutions and higher frame rates, while edge-based systems often operate under strict bandwidth limits. As a result, compression has become a key requirement for advanced image processing. The challenge is clear: how can developers reduce data throughput without jeopardizing inspection accuracy or overall image quality?

Growth of High-Resolution Sensors

A major trend in the machine vision industry has been the rise of new-generation CMOS sensors that deliver high-resolution images at high frame rates. In earlier inspection systems, cameras below one megapixel were standard, and even five megapixels was once considered a high resolution for industrial applications.

Today, 12-, 16-, 20-, or 25-megapixel sensors—such as Sony’s IMX54x family—are common across the industry. You can also find cameras with resolutions above 100 megapixels for advanced vision tasks.

Rising Bandwidth Requirements

Higher resolutions and faster frame rates naturally require higher interface bandwidth. This is why interfaces such as USB3 Vision, 5GigE, 10GigE, CoaXPress, and Camera Link have expanded rapidly in recent years. These standards deliver the bandwidth required for demanding machine vision applications.
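To put these bandwidth figures in perspective, a camera's raw data rate is roughly resolution × frame rate × bits per pixel. The sketch below computes that product. The helper name and the example figures (a 25-megapixel sensor at 50 fps with 8-bit pixels) are illustrative assumptions, and the result ignores protocol overhead such as packet headers and line coding.

```python
def raw_data_rate_gbps(megapixels: float, fps: float, bits_per_pixel: int) -> float:
    """Uncompressed payload rate in Gbit/s (hypothetical helper).

    Ignores protocol overhead (packet headers, line coding, blanking),
    so a real link needs headroom above this figure.
    """
    pixels_per_second = megapixels * 1e6 * fps
    return pixels_per_second * bits_per_pixel / 1e9


if __name__ == "__main__":
    # Illustrative figures only: 25 MP sensor, 50 fps, 8-bit monochrome pixels.
    rate = raw_data_rate_gbps(megapixels=25, fps=50, bits_per_pixel=8)
    print(f"Raw payload: {rate:.1f} Gbit/s")  # Raw payload: 10.0 Gbit/s
```

At 10 Gbit/s, this single stream already fills a 10GigE link before any overhead is counted.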

Impact on Host Systems

As image sizes grow, host computers and processing software must handle enormous data volumes. Traditionally, machine vision systems were stand-alone stations using powerful PCs to process everything locally. Today, vision is increasingly deployed in mobile and outdoor applications that rely on embedded computers and network connectivity for cloud or edge computing. Even when sensors and interfaces manage the raw image flow efficiently, the host still faces significant bandwidth pressure. This makes efficient compression essential.

Trade-Offs of Compression

Lossless compression algorithms preserve the original image but typically achieve only about a 2:1 compression ratio. This is often insufficient for high-resolution or high-frame-rate applications. Developers face a difficult trade-off: prioritize compression ratio or maintain image quality.
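The sketch below illustrates why lossless ratios stay modest on real sensor data. It applies a simple left-neighbor prediction (similar in spirit to PNG's Sub filter) and then Python's general-purpose `zlib` compressor as a stand-in for an image-specific lossless codec. The synthetic gradient-plus-noise test image and all function names are assumptions for illustration. Because sensor noise is essentially random, prediction cannot remove it, so the achievable ratio falls as the noise level rises; exact ratios depend on the image content and the codec.

```python
import random
import zlib


def make_test_image(width: int, height: int, noise: int, seed: int = 0) -> bytes:
    """Synthetic 8-bit grayscale image: horizontal gradient plus uniform noise."""
    rng = random.Random(seed)
    pixels = bytearray()
    for _ in range(height):
        for x in range(width):
            value = (x * 255) // (width - 1) + rng.randint(-noise, noise)
            pixels.append(min(255, max(0, value)))
    return bytes(pixels)


def delta_encode(raw: bytes, width: int) -> bytes:
    """Replace each pixel with its difference from the left neighbor (mod 256)."""
    out = bytearray(len(raw))
    for i, v in enumerate(raw):
        left = raw[i - 1] if i % width else 0  # first pixel of a row predicts from 0
        out[i] = (v - left) & 0xFF
    return bytes(out)


def delta_decode(residuals: bytes, width: int) -> bytes:
    """Invert delta_encode using the already reconstructed left neighbor."""
    out = bytearray(len(residuals))
    for i, r in enumerate(residuals):
        left = out[i - 1] if i % width else 0
        out[i] = (r + left) & 0xFF
    return bytes(out)


def lossless_ratio(raw: bytes, width: int) -> float:
    """Predict, compress, verify a bit-exact round trip, return raw/compressed."""
    packed = zlib.compress(delta_encode(raw, width), level=9)
    if delta_decode(zlib.decompress(packed), width) != raw:
        raise AssertionError("round trip is not bit-exact")
    return len(raw) / len(packed)


if __name__ == "__main__":
    for noise in (0, 4, 16):
        img = make_test_image(256, 256, noise)
        print(f"noise +/-{noise}: ratio {lossless_ratio(img, 256):.2f}:1")
```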

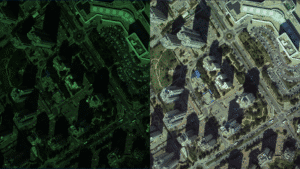

Algorithms such as MPEG can reduce image volume by factors of ten and beyond. However, they introduce visible degradation, especially around object edges. While this may be acceptable in consumer video or simple archival use, precision imaging tasks can suffer from even small quality losses. Edge artifacts, noise amplification, and fine-detail loss may all degrade final inspection or analysis results.
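Quality loss of this kind is often quantified with peak signal-to-noise ratio (PSNR), computed from the mean squared error between original and decoded pixels. The minimal sketch below works on plain Python lists of 8-bit values; the step-edge sample data are made up to mimic ringing around an object edge.

```python
import math
from typing import Sequence


def psnr(original: Sequence[int], decoded: Sequence[int], max_value: int = 255) -> float:
    """Peak signal-to-noise ratio in dB; returns infinity for identical images."""
    if len(original) != len(decoded) or not original:
        raise ValueError("images must be non-empty and the same size")
    mse = sum((a - b) ** 2 for a, b in zip(original, decoded)) / len(original)
    if mse == 0:
        return math.inf
    return 10 * math.log10(max_value ** 2 / mse)


if __name__ == "__main__":
    # Made-up 1-D profile across a dark-to-bright edge.
    original = [10, 10, 10, 10, 200, 200, 200, 200]
    # Hypothetical decoded version with undershoot/overshoot (ringing) at the edge.
    decoded = [10, 10, 12, 4, 206, 198, 200, 200]
    print(f"PSNR: {psnr(original, decoded):.2f} dB")  # PSNR: 38.13 dB
```

All of the error here sits in the four pixels around the edge, yet the score is still about 38 dB. Because PSNR averages error over the whole image, localized edge artifacts can hide behind a respectable number, so inspection systems may need task-specific checks as well.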

Generic video codecs are also designed for consumer video, typically in YUV 4:2:2 or 4:2:0 format (or RGB). The 4:2:2 and 4:2:0 formats subsample the chroma channels, reducing color resolution by design. These codecs also assume that high-frequency noise is acceptable, or even desirable, because it enhances perceived sharpness. Many of today’s industrial applications require clean edges, reliable zooming, and stable fine-detail reconstruction. In such cases, high-frequency noise becomes a serious limitation.
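The color-resolution loss is easy to quantify. The sketch below counts luma and chroma samples per frame for the three common formats; the 1920 × 1080 frame size and the function names are illustrative assumptions. In 4:2:0, each chroma plane carries only a quarter as many samples as the luma plane, so fine color detail and colored edges are reconstructed from far fewer measurements.

```python
# Divisors (horizontal, vertical) applied to each of the two chroma planes
# (Cb and Cr); the luma plane (Y) always stays at full resolution.
SUBSAMPLING = {
    "4:4:4": (1, 1),
    "4:2:2": (2, 1),
    "4:2:0": (2, 2),
}


def ceil_div(a: int, b: int) -> int:
    return -(-a // b)


def samples_per_frame(width: int, height: int, fmt: str) -> tuple[int, int]:
    """Return (luma_samples, chroma_samples) for one frame in the given format."""
    h_div, v_div = SUBSAMPLING[fmt]
    luma = width * height
    # Odd dimensions round up: one chroma sample covers the leftover pixels.
    chroma = 2 * ceil_div(width, h_div) * ceil_div(height, v_div)
    return luma, chroma


if __name__ == "__main__":
    for fmt in SUBSAMPLING:
        luma, chroma = samples_per_frame(1920, 1080, fmt)
        print(f"{fmt}: chroma keeps {chroma / (2 * luma):.0%} of full color resolution")
```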

Gidel’s Approach to High-Quality Compression

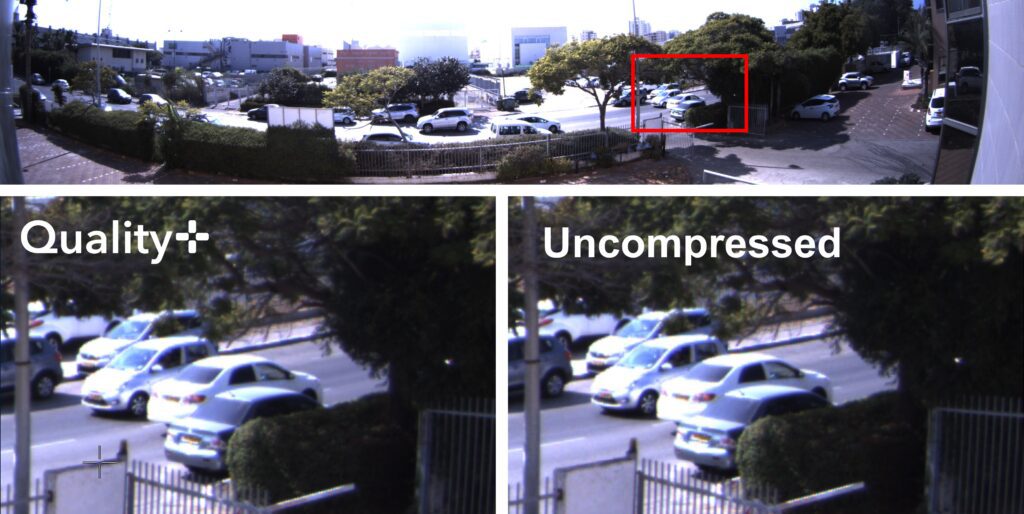

Gidel, an Israeli company specializing in FPGA development for vision applications, has addressed this challenge for more than 25 years. The company patented Gidel Imaging, a software tool that enhanced compressed images by estimating the DCT values as they were before quantization, reducing high-frequency noise during zooming. With its current Quality+ technology, Gidel eliminates or significantly reduces this noise at the source, supporting high-quality image compression in demanding applications.
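The DCT-domain idea can be illustrated generically. In DCT-based codecs such as JPEG, a block of pixels is transformed into frequency coefficients, and each coefficient is divided by a quantization step and rounded. Afterward, the decoder knows only the interval each coefficient fell into, and a standard decoder reconstructs the interval's center; an estimation approach can instead choose another value inside that interval. The sketch below shows the quantization step on a single 8-sample row. It is a textbook illustration under simplifying assumptions (1-D transform, one uniform step size of 16, made-up pixel data), not Gidel's patented estimator, whose details the article does not describe.

```python
import math

N = 8  # block length, as in JPEG's 8x8 blocks (1-D here for brevity)


def _scale(k: int) -> float:
    # Orthonormal DCT-II scaling factors.
    return math.sqrt(1 / N) if k == 0 else math.sqrt(2 / N)


def dct(x: list[float]) -> list[float]:
    """Orthonormal 1-D DCT-II of an N-sample row."""
    return [_scale(k) * sum(x[n] * math.cos(math.pi * (n + 0.5) * k / N) for n in range(N))
            for k in range(N)]


def idct(c: list[float]) -> list[float]:
    """Inverse of dct() (orthonormal DCT-III)."""
    return [sum(_scale(k) * c[k] * math.cos(math.pi * (n + 0.5) * k / N) for k in range(N))
            for n in range(N)]


def quantize(coeffs: list[float], step: float) -> list[int]:
    """Uniform quantization with one step (JPEG uses a per-coefficient table)."""
    return [round(c / step) for c in coeffs]


def dequantize(levels: list[int], step: float) -> list[float]:
    """Standard decoder choice: the center of each quantization interval."""
    return [q * step for q in levels]


def coefficient_interval(level: int, step: float) -> tuple[float, float]:
    """Range of original coefficient values that could have produced this level."""
    return (level - 0.5) * step, (level + 0.5) * step


if __name__ == "__main__":
    row = [52, 55, 61, 66, 70, 61, 64, 73]  # made-up 8-bit pixel row
    centered = [p - 128 for p in row]       # JPEG-style level shift
    step = 16
    coeffs = dct(centered)
    levels = quantize(coeffs, step)
    decoded = [min(255, max(0, round(v + 128))) for v in idct(dequantize(levels, step))]
    print("decoded:", decoded)
    for c, q in zip(coeffs, levels):
        lo, hi = coefficient_interval(q, step)
        print(f"coef {c:8.2f} -> level {q:3d}, original lay in [{lo:.1f}, {hi:.1f}]")
```

Because the transform is orthonormal, the squared reconstruction error in the pixel domain equals the squared error in the coefficient domain, so any better estimate inside each interval translates directly into a more accurate image.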

Today, Gidel introduces Gidel Quality+ Compression, developed to meet the needs of modern imaging systems. Quality+ provides high compression ratios while maintaining the image quality required for inspection. It can process more than one gigapixel per second per camera in real time on an FPGA with low power consumption, making it ideal for embedded computing.

Image Compression at the Acquisition Stage

Quality+ Compression supports multi-camera vision systems at full line speed. It runs on FPGAs integrated into Gidel’s high-performance frame grabbers and FantoVision embedded computers. System engineers can also build custom acquisition devices using Gidel’s FPGA modules.

Performing compression at the earliest acquisition stage reduces the load on CPU and GPU resources. It prevents bottlenecks between the frame grabber and the host processor and reduces the amount of data that must be processed, stored, or uploaded in edge-based systems. Quality+ uses minimal FPGA resources, enabling Gidel’s InfiniVision platform to grab and compress ten or more cameras running at one gigapixel per second each in real time. This supports high-speed and high-resolution imaging in systems with strict size, weight, and power (SWaP) constraints.
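The arithmetic behind the ten-camera figure shows why compressing inside the frame grabber matters. The sketch below multiplies camera count, pixel rate, and bit depth to get the aggregate raw rate, then divides by a compression ratio. The 8-bit pixel depth, the function names, and the ratios are assumptions for illustration; the article does not state Quality+ compression ratios.

```python
def system_throughput(cameras: int, gpix_per_s: float, bits_per_pixel: int,
                      ratio: float) -> tuple[float, float]:
    """Return (raw, compressed) aggregate data rates in Gbit/s."""
    raw = cameras * gpix_per_s * bits_per_pixel
    return raw, raw / ratio


def terabytes_per_hour(gbit_per_s: float) -> float:
    """Convert a sustained Gbit/s rate to decimal terabytes written per hour."""
    return gbit_per_s / 8 * 3600 / 1000


if __name__ == "__main__":
    # Ten cameras at 1 Gpixel/s each, as in the text; 8 bits/pixel is assumed.
    # Ratios are hypothetical: 1:1 (uncompressed), ~2:1 (lossless), 10:1 (lossy).
    for ratio in (1, 2, 10):
        _, compressed = system_throughput(10, 1.0, 8, ratio)
        print(f"{ratio:>2}:1 -> {compressed:5.1f} Gbit/s, "
              f"{terabytes_per_hour(compressed):5.1f} TB/hour")
```

Uncompressed, the ten streams total 80 Gbit/s (36 TB per hour); at a hypothetical 10:1 ratio, the same streams shrink to 8 Gbit/s.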

Balancing Compression Ratio and Image Quality

To meet today’s high-resolution and high-speed imaging requirements, compression algorithms must deliver high ratios while preserving the quality required for inspection. Each application defines image quality differently, so the optimal balance requires a flexible and customizable approach.
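One way to make this balance configurable is to let each application supply its own quality metric and limit, then select the most aggressive compression setting that still passes. The sketch below does this with a deliberately simple toy lossy stage (uniform requantization of 8-bit pixels) and two example metrics. All names, candidate step sizes, and limits are hypothetical; a real codec would expose its own parameters.

```python
from typing import Callable, Optional, Sequence

Metric = Callable[[Sequence[int], Sequence[int]], float]


def requantize(pixels: Sequence[int], step: int) -> list[int]:
    """Toy lossy stage: map each pixel to the center of its width-`step` bin.

    Fewer distinct levels means fewer bits after entropy coding, at the cost
    of accuracy; larger steps are more aggressive.
    """
    return [min(255, (p // step) * step + step // 2) for p in pixels]


def max_abs_error(a: Sequence[int], b: Sequence[int]) -> float:
    """Worst single-pixel error: strict, sensitive to local defects."""
    return max(abs(x - y) for x, y in zip(a, b))


def mean_abs_error(a: Sequence[int], b: Sequence[int]) -> float:
    """Average error: tolerant of isolated larger deviations."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def pick_step(pixels: Sequence[int], steps: Sequence[int],
              metric: Metric, limit: float) -> Optional[int]:
    """Largest candidate step whose reconstruction satisfies metric <= limit."""
    passing = [s for s in steps if metric(pixels, requantize(pixels, s)) <= limit]
    return max(passing) if passing else None


if __name__ == "__main__":
    pixels = list(range(256))  # every 8-bit value once
    steps = [2, 4, 8, 16, 32]
    print("max-error limit 4: step", pick_step(pixels, steps, max_abs_error, 4))    # 8
    print("mean-error limit 4: step", pick_step(pixels, steps, mean_abs_error, 4))  # 16
```

The same numeric limit selects different settings depending on whether the application cares about worst-case or average error.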

(This article was published in German in Inspect magazine.)
