This FPGA vs GPU interview features Gidel’s Founder & CTO, Reuven Weintraub, speaking at The Next FPGA Platform conference in San Jose.

He explains why FPGAs are regaining momentum in high-performance computing and how modern workloads benefit from FPGA architectures where GPUs face bottlenecks.

FPGA vs GPU Interview – Key Insights from the Conference

During the session, Mr. Weintraub emphasized that the traditional view of FPGAs as “hard to program” is rapidly changing. With improved toolchains, modular IP libraries, and higher-level development frameworks, FPGAs are becoming far more accessible. This shift allows developers to reach performance levels that GPUs often cannot achieve due to architectural constraints.

One major topic discussed was memory bandwidth. GPUs achieve high throughput, but their fixed memory hierarchies often become the limiting factor. FPGAs, by contrast, let developers build data pipelines tailored to the exact processing flow. Such pipelines avoid idle cycles, reduce latency, and handle non-uniform or sparse workloads efficiently.

Where FPGAs Outperform GPUs

- Streaming and real-time processing – FPGAs process data as it arrives, without batching or queuing delays.

- Deterministic latency – FPGAs suit systems that need consistent timing that GPUs cannot guarantee.

- Vector processing and custom compute engines – The architecture adapts to the workload rather than forcing the workload to adapt to the architecture.

- Lower power consumption – FPGAs often deliver superior performance per watt in AI inference, vision, and signal processing.

- Fine-grained parallelism – Enables massive concurrency tailored to the real-time dataflow.
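
The difference between streaming and batched processing in the list above can be illustrated with a toy timing model. This sketch is not taken from the interview: the function names, sample period, batch size, and compute times are all illustrative assumptions. It assumes samples arrive at a fixed period, that a batched processor starts work only once a full batch has arrived, and that a streaming pipeline adds the same fixed delay to every sample.

```python
def batched_latencies(n_samples, period_us, batch_size, batch_compute_us):
    """Per-sample latency when processing starts only after a full batch arrives.

    Assumes each batch finishes before the next one is ready (no backlog).
    """
    latencies = []
    for i in range(n_samples):
        arrival = i * period_us
        # Index of the last sample belonging to this sample's batch.
        last_in_batch = (i // batch_size + 1) * batch_size - 1
        batch_ready = last_in_batch * period_us
        done = batch_ready + batch_compute_us
        latencies.append(done - arrival)
    return latencies


def streaming_latencies(n_samples, pipeline_us):
    """Per-sample latency for a pipeline with a fixed delay per sample."""
    return [pipeline_us] * n_samples


def jitter(latencies):
    """Spread between the slowest and fastest sample."""
    return max(latencies) - min(latencies)


# Hypothetical numbers: one sample every 10 us, batches of 4, 5 us of compute.
batched = batched_latencies(n_samples=8, period_us=10, batch_size=4, batch_compute_us=5)
streamed = streaming_latencies(n_samples=8, pipeline_us=5)
print("batched latencies:", batched, "jitter:", jitter(batched))
print("streaming latencies:", streamed, "jitter:", jitter(streamed))
```

With these assumed numbers, the batched latencies range from 5 to 35 µs (30 µs of jitter) because early samples wait for the batch to fill, while the streaming latencies are constant. Real devices are more complex, but the model shows why batching alone introduces timing variation.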

Gidel’s Founder and CTO also explained that many modern applications—such as cybersecurity, video analytics, radar, and real-time AI—require predictable performance. FPGAs supply this determinism naturally, while GPUs rely on shared compute and memory resources that introduce jitter and latency.

FPGA + GPU: Why the Future Isn’t Either–Or

While the interview highlights the shifting balance between FPGAs and GPUs, Gidel’s CTO emphasizes that the strongest systems do not choose between them. Instead, the real advantage comes from combining both technologies in the same architecture. The GPU excels at AI inference, analytics, and massively parallel workloads, while the FPGA delivers deterministic capture, high-bandwidth I/O, pre-processing, and sensor-level logic.

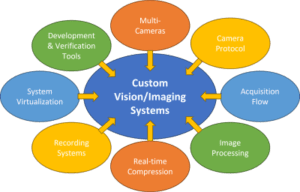

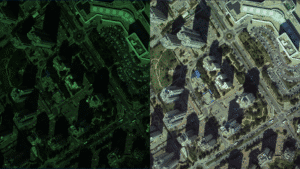

A major benefit of the FPGA is the ability to build custom hardware algorithms that run at wire speed. These include HDR pipelines, debayering, noise reduction, quality enhancement, region-of-interest extraction, timestamping, multi-stream alignment, real-time compression, and even neural-network pre-processing. Because these operations run directly on the FPGA fabric, the CPU and GPU are relieved of them entirely, which reduces power, bandwidth, and latency.

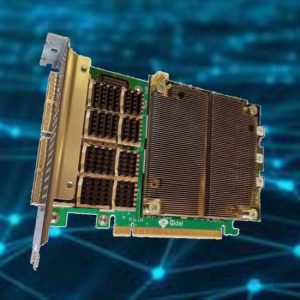

This hybrid approach is exactly what powers Gidel’s FantoVision embedded computers. Each unit combines an NVIDIA Jetson GPU with a Gidel Arria-10 FPGA, forming a unified edge platform for real-time imaging. In this setup, the FPGA handles 20–40 Gb/s acquisition, low-latency triggering, camera control, on-FPGA compression, and advanced pre-processing, while the GPU focuses on AI models, analytics, and application-level logic.
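
To put the 20–40 Gb/s acquisition figure in context, a rough back-of-the-envelope calculation can help. The sketch below is an illustration only: the camera parameters (1920×1080 pixels, 8 bits per pixel, 60 frames per second) and the function names are assumptions, not Gidel specifications, and the calculation ignores protocol overhead and blanking intervals.

```python
def camera_rate_bps(width, height, bits_per_pixel, fps):
    """Raw (uncompressed) pixel data rate of one camera, in bits per second."""
    return width * height * bits_per_pixel * fps


def cameras_per_link(link_bps, per_camera_bps):
    """Number of such cameras that fit in the link budget, ignoring overhead."""
    return link_bps // per_camera_bps


# Hypothetical camera: 1920x1080, 8-bit pixels, 60 frames per second.
rate = camera_rate_bps(width=1920, height=1080, bits_per_pixel=8, fps=60)
print(f"per-camera rate: {rate / 1e9:.3f} Gb/s")

for link_gbps in (20, 40):
    link_bps = link_gbps * 1_000_000_000
    print(f"{link_gbps} Gb/s budget: {cameras_per_link(link_bps, rate)} cameras")
```

Under these assumptions one camera produces just under 1 Gb/s of raw pixel data, so a 20 Gb/s budget corresponds to roughly 20 such streams and a 40 Gb/s budget to roughly 40. Higher resolutions, bit depths, or frame rates reduce that count proportionally, which is where on-FPGA compression and pre-processing become relevant.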

Accelerating FPGA Development with ProcVision

To simplify and accelerate FPGA development, Gidel provides the ProcVision Suite, a modular vision SDK designed for imaging and high-speed acquisition systems. ProcVision enables developers to build fully customized acquisition and processing flows using Gidel’s InfiniVision and ProcFG architectures. It supports inline ISP, HDR, debayering, noise reduction, and on-FPGA compression engines such as Quality+, Lossless, and JPEG.

Developers can insert their own proprietary algorithms directly into the FPGA pipeline, enabling real-time preprocessing and significant offloading of CPU and GPU resources. The suite includes the CertifEye validation environment, which streamlines testing and verification of custom IP. By using ProcVision, teams can deploy FPGA-accelerated imaging pipelines much faster and more reliably than with traditional FPGA flows.

Scaling to 100+ Cameras with InfiniVision

Gidel’s approach scales even further through InfiniVision, the company’s open-FPGA acquisition and synchronization framework. InfiniVision enables distributed imaging systems that can capture, stream, and precisely synchronize 100+ cameras across multiple FantoVision units—maintaining deterministic timing and real-time performance. This level of synchronization is extremely difficult to achieve with GPU-only or software-driven architectures.
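
One way to reason about multi-camera synchronization is to measure the skew between timestamps of frames that should have been captured at the same instant. The following sketch is a hypothetical illustration, not the InfiniVision API: it assumes every camera reports per-frame timestamps in microseconds against a shared clock, and the function names, camera names, and tolerance values are invented for the example.

```python
def frame_skews_us(timestamps_by_camera):
    """For each frame index, return the max-minus-min timestamp across cameras."""
    # zip(*...) groups the k-th frame of every camera together and stops
    # at the shortest list, so only frames present on all cameras are compared.
    return [max(group) - min(group)
            for group in zip(*timestamps_by_camera.values())]


def is_synchronized(timestamps_by_camera, tolerance_us):
    """True if every frame's skew is within the given tolerance."""
    return all(skew <= tolerance_us
               for skew in frame_skews_us(timestamps_by_camera))


# Hypothetical timestamps (microseconds) for three cameras, three frames each.
example = {
    "cam0": [0, 10_000, 20_000],
    "cam1": [2, 10_001, 20_003],
    "cam2": [1, 10_004, 19_999],
}
print("skews (us):", frame_skews_us(example))
print("within 5 us:", is_synchronized(example, tolerance_us=5))
print("within 3 us:", is_synchronized(example, tolerance_us=3))
```

In this made-up data set the per-frame skews are 2, 4, and 4 µs, so the cameras pass a 5 µs tolerance but fail a 3 µs one. A check of this kind only verifies synchronization after the fact; achieving tight skew across many units is the hard part that the text attributes to hardware-level triggering.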

By combining FPGA determinism, GPU flexibility, ProcVision’s accelerated development flow, and InfiniVision’s large-scale synchronization, Gidel demonstrates that the future of computing is not FPGA versus GPU but FPGA + GPU working together. The combination delivers higher throughput, lower latency, and more reliable system behavior than either technology could achieve alone.

Read the Full FPGA vs GPU Interview

You can read the full interview on The Next Platform: FPGA vs GPU – Time for a Compute Rematch

Related Products

- FantoVision20

- SkyBoost-RT

- FantoVision20-CL

- FantoVision20-GigE

- FantoVision40-CXP12

- SkyBoost

- FantoVision40

- HDR Correction

- ProcVision Suite

- Lossless Compression

- InfiniVision

- ProcFG

- Quality+ Compression

- Proc1C10N-120GigE

- Proc10N

- JPEG Compression